Tuesday, February 28, 2006

Monday, February 27, 2006

VoIP transition 'will take 20 years' - Computeract!ve

Bad news for Nuasis and others in the VoIP space. An industry expert from HP predicts it will take up to 20 years to complete the transition to VoIP from traditional POTS service. The industry hype has been predicting much quicker adoption each year for the past five years and we still aren't seeing it.

Posted by dmourati at 8:12 PM, 0 comments

Thursday, February 23, 2006

MMA Global -

Here's a followup link to the great ABCs of Mobile Marketing series by Laura Marriott. Laura Marriott is executive director of the Mobile Marketing Association (MMA), which works to clear obstacles to market development, to establish standards and best practices for sustainable growth, and to evangelize the mobile channel for use by brands and third-party content providers. The MMA has over 250 members worldwide, representing over 16 countries.

Posted by dmourati at 11:36 PM, 0 comments

The ABCs of Mobile Marketing, Part 1

MASP and the Mobile Advertising Ecosystem:

Posted by dmourati at 11:36 PM, 0 comments

Splunk Question and Answer

I got the following email yesterday, from a user named jrichardson regarding some blog posts I had made on my progress with Splunk.

Q:

Date: Wed, 22 Feb 2006 20:42:46 -0800 (PST)

From: jrichardson

To: dmourati@linfactory.com

Subject: [Dmourati Blog] 2/22/2006 08:33:17 PM

How did you configure the tailing processor in splunk?I'd like create

a "rule" for "Everything in /var/log/remote/$HOSTNAME/messages"

without having to create a seperate "file" tag for each remote

server.

--

Posted by jrichardson to Dmourati Blog at 2/22/2006 08:33:17 PM

A:

Jrichardson,

You found a weak spot in my blog posts, namely that I haven't figured out how to get Blogger to correctly display embedded XML such as the configuration elements from my config.xml. Sorry about that. To answer your question, I've been using the TailingProcessor with a one-to-one mapping to my log files. For example, I have (XML below intentionally mucked to avoid processing by Blogger):

fileName/var/log/remote-syslog-ng/demetri04/messages /fileName

And another config stanza containing:

fileName /var/log/remote-syslog-ng/demetri05/messages /fileName

To your point, what if I had demetri01-demetri99 that I wanted to configure? I can think of two options, neither of which is particularly attractive. The first is to use the Tailing all files in a Directory option. The restriction, as defined in the docs, is that all the files in the directory need to be of the same type. To get that flat arrangement, you'd need some kind of symlink structure that presents all logs of a single type in one flat directory. Something like:

[root@demetri05 remote-syslog-ng]# cd splunk/

[root@demetri05 splunk]# ls -lah

total 8.0K

drwxr-xr-x 2 root root 4.0K Feb 23 01:15 .

drwx------ 5 root root 4.0K Feb 23 01:15 ..

lrwxrwxrwx 1 root root 21 Feb 23 01:15 demetri04-messages -> ../demetri04/messages

lrwxrwxrwx 1 root root 21 Feb 23 01:15 demetri05-messges -> ../demetri05/messages
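A flat directory like the one in the listing above could also be built by a script rather than by hand. Here is a minimal Python sketch, assuming one subdirectory per remote host, each holding a `messages` file. The function name `build_symlink_farm` and its parameters are made up for illustration and are not part of Splunk.

```python
import os
from pathlib import Path


def build_symlink_farm(log_root, farm_name="splunk", log_name="messages"):
    """Link <log_root>/<host>/<log_name> into one flat directory.

    Returns the sorted list of entry names found in the flat directory.
    """
    root = Path(log_root)
    farm = root / farm_name
    farm.mkdir(exist_ok=True)

    for host_dir in sorted(root.iterdir()):
        # Skip the flat directory itself and anything that is not a directory.
        if host_dir == farm or not host_dir.is_dir():
            continue
        target = host_dir / log_name
        if not target.is_file():
            continue
        link = farm / f"{host_dir.name}-{log_name}"
        if not link.is_symlink():
            # Relative target such as ../demetri04/messages, as in the listing.
            os.symlink(os.path.join("..", host_dir.name, log_name), link)

    return sorted(p.name for p in farm.iterdir())
```

Running it again is harmless because existing links are left alone, so it could be called from cron whenever a new host starts logging.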

Not particularly elegant, but it would probably work. The other solution that comes to mind is to generate the config.xml file programmatically. I use Perl's XML::Simple quite a bit for straightforward XML generation and parsing. That might be a bit more work at first but is probably more flexible.

The only other option that comes to mind is to skip the TailingProcessor and go with the directory monitor plus a cron job or some such to pump data in. I doubt I'd go that route, however. The Perl or other programmatic method is probably the best.
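To make the programmatic option concrete, here is a rough sketch that uses Python's `xml.etree.ElementTree` in place of Perl's XML::Simple. Only the `fileName` element comes from the stanzas quoted above. The `filelist` and `file` wrapper elements and the function name `build_tailing_config` are assumptions for illustration, so check the real config.xml layout before using anything like this.

```python
import xml.etree.ElementTree as ET


def build_tailing_config(hosts, log_root="/var/log/remote-syslog-ng"):
    """Return XML text with one <fileName> entry per remote host."""
    # ASSUMPTION: <filelist> and <file> are illustrative wrappers; only
    # <fileName> is taken from the stanzas quoted in the post.
    filelist = ET.Element("filelist")
    for host in hosts:
        entry = ET.SubElement(filelist, "file")
        name = ET.SubElement(entry, "fileName")
        name.text = f"{log_root}/{host}/messages"
    return ET.tostring(filelist, encoding="unicode")


# demetri01 through demetri99, as in the question.
hosts = [f"demetri{n:02d}" for n in range(1, 100)]
xml_text = build_tailing_config(hosts)
```

Because the element tree does the escaping and closes every tag, this approach also avoids the hand-editing typos that are easy to make in a long config file.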

Posted by dmourati at 12:48 AM, 0 comments

Sunday, February 19, 2006

Splunk Integration with JBoss

I'm doing some work now to integrate Splunk with JBoss. This is fun stuff and somewhat interesting, as application server monitoring and troubleshooting is one of my many areas of focus. To get started, I set up a Linux box running RHEL 3 and Splunk 1.1. The box is named demetri05.

[root@demetri05 root]# cat /etc/redhat-release

Red Hat Enterprise Linux ES release 3 (Taroon Update 6)

Next, I went to jpackage.org to get a binary install of the JBoss application server. This was a bit tricky, but not too bad. My main problem was that demetri05 doesn't have access to the internet, so I couldn't follow the recommendation to set up a yum mirror for up2date. Instead, I had to download the RPMs one at a time from another workstation and build up a group of RPMs that was internally consistent, meaning there were no failed dependencies. This was not fun but fairly straightforward. The final list looks like this:

[root@demetri05 root]# cd jboss-install/

[root@demetri05 jboss-install]# ls

crimson-1.1.3-13jpp.noarch.rpm servletapi4-4.0.4-3jpp.noarch.rpm

gnu.getopt-1.0.10-1jpp.noarch.rpm xalan-j2-2.7.0-1jpp.noarch.rpm

gnu.regexp-1.1.4-9jpp.noarch.rpm xerces-j2-2.7.1-1jpp.noarch.rpm

jakarta-commons-logging-1.0.4-2jpp.noarch.rpm xml-commons-1.3.02-2jpp.noarch.rpm

jboss-3.0.8-4jpp.noarch.rpm xml-commons-apis-1.3.02-2jpp.noarch.rpm

jpackage-utils-1.6.6-1jpp.noarch.rpm xml-commons-resolver-1.1-3jpp.noarch.rpm

log4j-1.2.12-1jpp.noarch.rpm

Two other RPMs were required, but these were both part of the Red Hat distribution as opposed to the jpackage repository. They were ant and bcel. Those in turn had dependencies, but up2date resolved them for me without issue.

After installing JBoss on my system, I needed to point Splunk to the default log file, which in my case was /var/log/jboss/default/server.log. Splunk offers many methods for monitoring log files; the most appropriate one for me was the TailingProcessor. The TailingProcessor has the advantage of working with open files: as JBoss adds information to the log file, Splunk continuously pulls in that same data automatically. For more info on the TailingProcessor, please visit the Splunk documentation here:

Splunk Docs

To make my change, I needed to edit the config.xml file located here:

[root@demetri05 root]# cd /opt/splunk/etc/modules/tailingprocessor/

[root@demetri05 tailingprocessor]# ls

config.xml

Here is the relevant addition. Blogger, please be nice to my XML:

/var/log/jboss/default/server.log

$$SPLUNK_HOME]]/etc/myinstall/dynamicautogenericpipeline.xml

demetri05

Initially, I made a mistake by copy-pasting the "" end tag in addition to the

[root@demetri05 tailingprocessor]# /etc/init.d/splunk restart

splunkSearch is not running. [FAILED]

splunkd is not running. [FAILED]

== Checking prerequisites...

Version is Splunk Server

Checking http port [8000]: open

Checking https port [8001]: open

Checking mgmt port [8089]: open

Checking search port [9099]: open

== All checks passed

Starting splunkd [ OK ]

Starting splunkSearch [ OK ]

Now, let's take a look at what Splunk sees in this new log file. Hmm, still not working. Okay, I've found one more typo: "FileList" instead of "filelist." That's annoying but easy to fix. One more time for good measure:

[root@demetri05 tailingprocessor]# /etc/init.d/splunk stop

Stopping splunkSearch... [ OK ]

Stopping splunkd. This operation can take several minutes, please be patient... [ OK ]

[root@demetri05 tailingprocessor]# /etc/init.d/splunk start

== Checking prerequisites...

Version is Splunk Server

Checking http port [8000]: time_wait

Waiting for port to reopen ........ done

Checking https port [8001]: open

Checking mgmt port [8089]: time_wait

Waiting for port to reopen ...................... done

Checking search port [9099]: open

== All checks passed

Starting splunkd [ OK ]

Starting splunkSearch [ OK ]

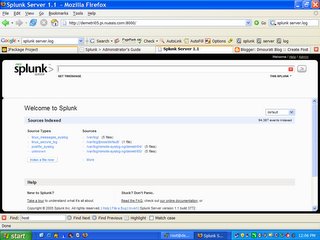

Looks good, let's see what happened on the front end:

Success, note the line that starts /var/log/jboss/default. This is the new file/directory I added to TailingProcessor.

Looking at my Splunk server, I see I have 350 events (and growing!) from the source file /var/log/jboss/default/server.log. Now I need to put some information around these events to clarify exactly what each event is, how it was generated, and in general, what to make of it. This is not easy, so I'll take it in stages.

The first thing I did was to pump a bunch of this data to SplunkBase. This was easy to do by clicking on Check splunk.com next to the event in question, which uploads the event to SplunkBase for capture and analysis. I need a scalable way of doing this; maybe I just haven't found it or figured it out yet.

Now I'm well into a phase where I've added some details surrounding my events. One thing I'm doing is "Renaming Source Types." I'm looking through SplunkBase here:

All Your Base are Belong to Splunk!

SplunkBase

I'm also looking at some very similar information recently posted by another SplunkBase user named Peter Dickten. Nice job Peter!

Now, here's more on my progress. I have a user profile up here:

http://www.splunk.com/user/dmourati

This will show the events I'm currently managing. I've also found some stuff here that I did previously, mainly from the OS side but still useful context for my current assignment.

While the JBoss install I have going on my demetri05 box is fairly vanilla, I'd still like to do some nasty stuff there and try to break it to generate new and perhaps more interesting logs.

Meanwhile, I've found two more log files I want to suck into Splunk. They are both in the /var/log/jboss/default/ directory and they are called boot.log and date.request.log, where the date part changes every day. This presents a challenge for me wrt the Splunk configuration, as none of the files I have currently configured has a dynamic name like the request log does. I can think of a few ways to solve this, but for the moment, I'll just put today's file in there, 2006_02_19.request.log, and figure the harder part out as I get the chance.
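One possible way to handle the changing name is to compute it from the date. This is a minimal Python sketch, assuming the pattern is year_month_day followed by `.request.log`, which is inferred from the single file name 2006_02_19.request.log. The function name `request_log_path` is hypothetical.

```python
from datetime import date


def request_log_path(day, log_dir="/var/log/jboss/default"):
    """Build the path of the dated request log for the given day."""
    return f"{log_dir}/{day.strftime('%Y_%m_%d')}.request.log"


print(request_log_path(date(2006, 2, 19)))
# prints /var/log/jboss/default/2006_02_19.request.log
```

Calling `request_log_path(date.today())` from a daily job would give the current file name, which could then be written into the configuration before a restart.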

So, I need to edit my config.xml again. This time I'll be more careful, especially with the syntax. I'll eventually need some kind of library to modify and verify config.xml as it evolves. Perl's XML::Simple comes to mind but I'm not going there now.
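A check of that kind does not need much code. Below is a rough Python sketch using `xml.etree.ElementTree` rather than XML::Simple. It only tests that the XML is well formed and that tag names match a known set, which would catch an unmatched end tag or a case typo such as "FileList". The function names and the set of known tags are assumptions, not anything Splunk provides.

```python
import xml.etree.ElementTree as ET


def check_well_formed(xml_text):
    """Return (True, "") if xml_text parses, else (False, error message)."""
    try:
        ET.fromstring(xml_text)
    except ET.ParseError as err:
        return False, str(err)
    return True, ""


def unknown_tags(xml_text, known_tags):
    """Return tag names not found in known_tags (comparison is case-sensitive)."""
    root = ET.fromstring(xml_text)
    return sorted({el.tag for el in root.iter()} - set(known_tags))


# ASSUMPTION: this tag set is illustrative, not the full config.xml schema.
KNOWN = {"filelist", "fileName"}
ok, message = check_well_formed("<filelist><fileName>/a</filelist>")
typos = unknown_tags("<FileList><fileName>/a</fileName></FileList>", KNOWN)
```

Here `ok` is False because the `fileName` element is never closed, and `typos` contains "FileList". Running both checks before restarting Splunk would flag this class of mistake early.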

I've re-edited the relevant config.xml for tailing files and restarted Splunk via the sysvinit script. Now, let's reinvestigate the front end to see whether it has picked up my two new log files.

For some reason, I can't get Splunk to take these two new files. The reason may be that the files are in a non-standard format. I'll have to look more into this. For now, I'm going to try the directory option and simply copy the files to be pulled in on the fly.

It turns out the problem was a mis-edit on my config.xml. I had to track this down by tailing the splunkd.log file. (Seems like Splunk should be able to figure this problem out on its own, no?)

Once that was fixed, I started seeing new events making their way into my Splunk index.

I started uploading events to SplunkBase by going back through the Check splunk.com links. Eventually, I added some "meat" to each of the Event Types by putting in some basic information about what the event was and where it came from. I then put a link back to the JBoss project page for additional info. Based on what I've been hearing, maybe I should have linked to Oracle's home page instead?

Posted by dmourati at 11:48 AM, 1 comment